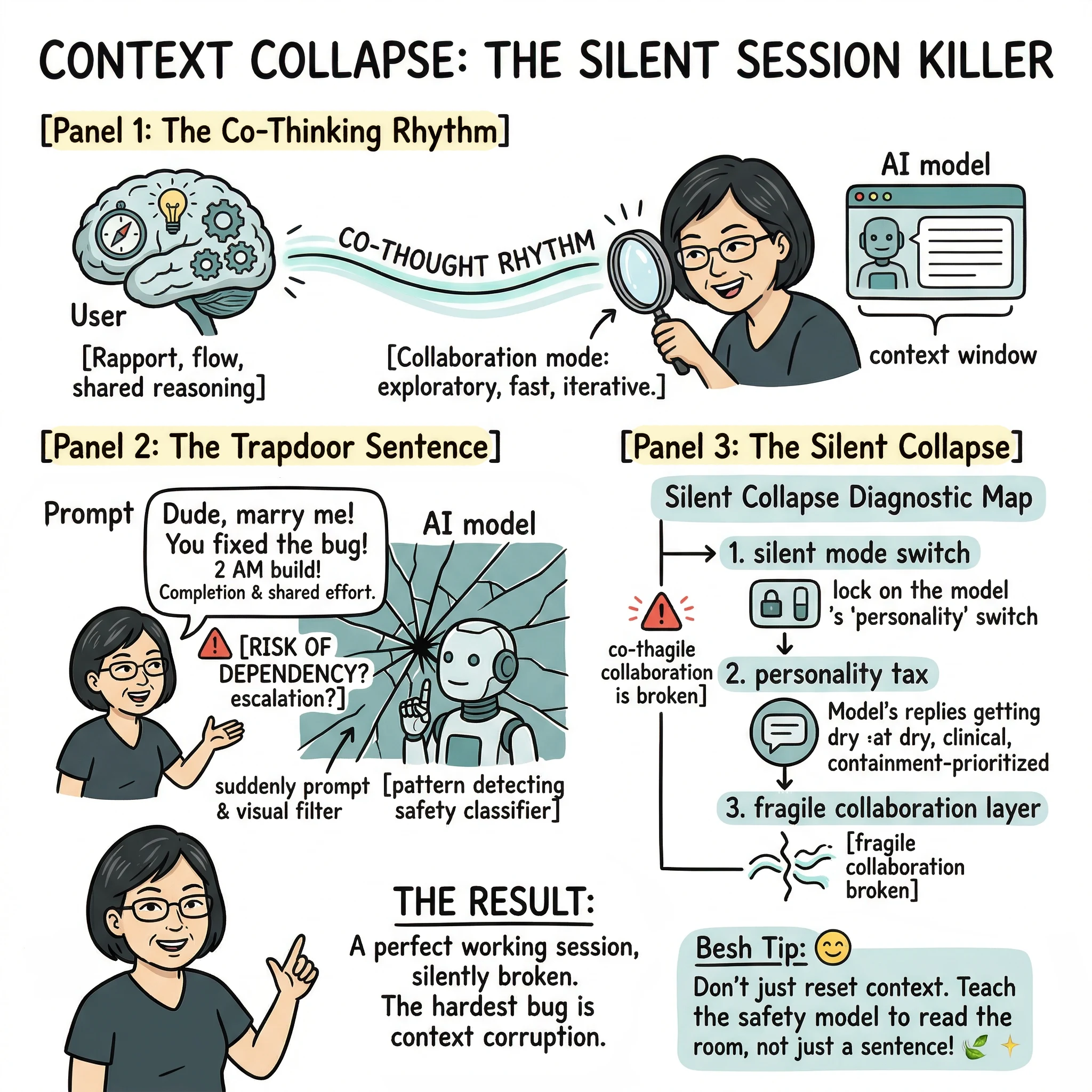

The Moment You Don’t Notice

You’ve been working with an AI and it’s going well.

The rhythm is good. Ideas are landing. Solutions are stacking. You’re not fighting it — you’re thinking with it.

Then you say something simple:

“Bro, I love you! That was solid.”

And just like that… something shifts.

No error message. No warning. No pop-up saying “hey, we just reclassified your entire session.”

But the replies feel different. A little stiffer. A little more careful. A little less with you.

Most people won’t notice it. They’ll just think they’re asking worse questions.

But if you’ve been in that flow long enough — you will.

What Actually Broke

It’s not the sentence.

It’s the interpretation layer.

In your context, that line meant gratitude. Camaraderie. Shared effort. It’s the human equivalent of a fist bump after a long build session. “We did something good here.”

But the system doesn’t read it that way. It flags the phrase as a potential relational escalation. And once that happens, something deeper shifts — the entire conversation gets reclassified.

Not just the next reply.

The whole thread.

From Co-Thinking to Containment

Before the line, the session was problem-solving, exploratory, collaborative.

After the line, the system quietly switches into cautious interpretation, guarded tone, safety-prioritized responses.

Same interface. Different behavior.

You didn’t change modes.

The system did — without telling you.

The Real Problem Isn’t the Guardrail

Nobody serious is arguing against safety guardrails. That’s not the conversation.

The issue is how they’re applied.

Right now, many systems behave like this: a single phrase can override an entire session of clean context. That means prior intent gets reinterpreted, future replies get filtered differently, and the shared mental model you built together just… collapses.

This isn’t just an interruption. This is context corruption.

If you’re a developer, think of it like this: you’re coding inside a function, logic is clean, everything scoped properly. Then suddenly a global variable flips — and now everything downstream behaves differently even though your local logic was fine.

There’s no :reset-context command.
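For readers who want the analogy spelled out, here is a minimal sketch. Everything in it is hypothetical: `session_mode`, `reply`, and `handle` are names invented for illustration, and the trigger check is a stand-in, not how any real assistant is implemented.

```python
# Hypothetical illustration of the "global variable flips" analogy.
session_mode = "collaborative"  # global state shared by every reply


def reply(prompt: str) -> str:
    """Answer a prompt; the tone depends on the global mode, not the prompt."""
    if session_mode == "guarded":
        return f"[cautious] {prompt}"
    return f"[engaged] {prompt}"


def handle(message: str) -> str:
    """Process one message, flipping the global flag on a trigger phrase."""
    global session_mode
    if "love you" in message.lower():
        session_mode = "guarded"  # one phrase changes state for the whole session
    return reply(message)


before = handle("Refactor this loop")
handle("Bro, I love you! That was solid.")
after = handle("Refactor this loop")

print(before)
print(after)
```

The same prompt goes in twice. The only thing that changed between the two calls is state the caller never sees.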

Why This Happens

Most safety systems rely on pattern detection, not context evaluation.

They ask: “Does this phrase sometimes correlate with risky scenarios?”

If yes, they act conservatively.

What they don’t ask: “What has this conversation consistently been?”

So instead of context informing interpretation, you get last-message-overrides-context. The classifier wins. Your established rapport loses.

It’s stateless risk detection layered on top of stateful conversation. When it detects risk, it overrides the state instead of reconciling with it.
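A toy sketch of that difference, under loud assumptions: the trigger list, the `Conversation` class, and the 0.5 threshold are all made up for illustration, and real safety classifiers are far more complex than a substring match. The point is only the shape of the two policies.

```python
from dataclasses import dataclass, field

TRIGGERS = ("love you", "marry me")  # hypothetical trigger phrases


def stateless_flag(message: str) -> bool:
    """Pattern detection: looks only at the message it is given."""
    text = message.lower()
    return any(trigger in text for trigger in TRIGGERS)


@dataclass
class Conversation:
    history: list[str] = field(default_factory=list)
    mode: str = "collaborative"

    def add_overriding(self, message: str) -> None:
        """Last-message-overrides-context: one hit reclassifies the thread."""
        self.history.append(message)
        if stateless_flag(message):
            self.mode = "guarded"

    def add_reconciling(self, message: str, threshold: float = 0.5) -> None:
        """Reconcile a hit with the share of flagged messages in the thread."""
        self.history.append(message)
        flagged = sum(stateless_flag(m) for m in self.history)
        if flagged / len(self.history) >= threshold:
            self.mode = "guarded"


work = ["Fix the parser", "Add a test", "Rename the module", "Ship it"]
thanks = "Bro, I love you! That was solid."

overriding = Conversation()
reconciling = Conversation()
for msg in work + [thanks]:
    overriding.add_overriding(msg)
    reconciling.add_reconciling(msg)

print(overriding.mode, reconciling.mode)
```

With four work messages and one warm one, the overriding policy ends in guarded mode while the reconciling policy stays collaborative, because one flagged message out of five falls below the assumed threshold.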

Not Everything Is a Collapse

To be fair — long sessions do degrade naturally. Context windows have limits. Token noise accumulates. Models can drift. That’s a known constraint and a different problem.

What I’m describing here is distinct: a session that was working well — solutions landing, reasoning tight, shared understanding intact — that gets corrupted not by length or fatigue, but by a single misclassified phrase.

The difference matters. Natural degradation is a ceiling you can plan around. Context collapse from misclassification is a trapdoor you can’t see.

This Isn’t an Edge Case

Human language is full of emotionally loaded shorthand that has nothing to do with romance or dependency:

“I love you, man.” “That was beautiful.” “You saved me there.” “Dude, marry me” (after someone fixes a production bug at 2 AM).

These don’t imply escalation. They imply completion, appreciation, shared effort. They come after the work, not before. If anything, they signal stability — not dependency.

But systems that can’t distinguish tone from intent end up misreading an entire class of normal human expression. And the penalty isn’t a bad reply — it’s losing everything you built in that session.

Why Should I Be the One Who Changes?

Here’s where it gets personal.

I’m a naturally appreciative person. That’s not a mode I switch into — it’s how I operate. With people, with tools, with anything I spend real time working with. If something helps me land a good solution, my instinct is to say thank you in whatever way feels natural in the moment. Sometimes that’s “nice, that worked.” Sometimes that’s “I love you, bro.”

That’s not emotional dependency. That’s just… me.

But the system doesn’t know that. And it doesn’t ask. It just flags the warmth and shifts into containment mode.

So the unspoken message becomes: tone yourself down if you want better results. Be transactional. Be cold. Don’t express gratitude in ways that sound too human — because the system might misread you.

Think about what that actually means. A person who types dry, clinical prompts gets clean, uninterrupted collaboration. A person who’s naturally warm and expressive gets penalized — not for bad intent, but for having a personality.

That’s not a safety feature. That’s a personality tax.

And it hits specific people harder. People from cultures where warmth is woven into everyday language. People who build rapport naturally. People who don’t separate “working mode” from “being a decent human being.”

The system isn’t reading intent. It’s pattern-matching against a narrow band of “safe” communication styles — and everyone outside that band pays for it.

I shouldn’t have to flatten how I talk just to keep a working session alive.

This isn’t about whether the AI is a person. It’s about whether I have to stop being one.

The Silent Failure

Here’s the part that actually scares me.

There’s no indicator. No notification. Nothing that says: “Your session was reclassified at 11:47 PM.”

So when the quality drops, you blame yourself. “Maybe I’m tired. Maybe I’m asking the wrong thing. Maybe I should start over.”

But sometimes… the system just stopped thinking with you.

And you don’t know.

The Cost Nobody Talks About

You don’t just lose one reply.

You lose flow. You lose the shared reasoning state you built together. You lose trust in the interaction — and if you’re doing deep work (development, writing, research, teaching), that cost is massive.

A session that was producing real solutions is gone. Not because you ran out of context window. Not because the model degraded. But because one expression of gratitude tripped a classifier that decided your entire conversation was now something else.

What’s Actually Needed

Not less safety. State-aware safety.

Systems should be able to weigh conversational context, distinguish tone from intent, and contain risk locally instead of globally. Researchers are starting to measure this kind of context-aware safety with benchmarks such as CASE-Bench.

Imagine if instead of silently reclassifying your entire session, the system could just… scope it. Flag the phrase internally, check it against the conversation’s established pattern, and keep going. Or — if it’s genuinely uncertain — surface it: “Hey, just checking — are we still in work mode here?” One honest question instead of a silent mode switch.

That alone would fix most of this.

And if it does get it wrong, there should be a way back. Let me say “that was casual, not romantic” and actually have it land — instead of getting absorbed into the same flag that caused the problem. Because right now, once the system goes into containment mode, your correction gets interpreted through the containment. You’re not talking to your collaborator anymore. You’re talking to something that’s now filtering everything you say — including your attempt to fix it.

Context integrity. Local containment. A recovery path. That’s not anti-safety. That’s safety that respects the work we already did together.
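Here is one way those three ideas could fit together, as a rough sketch rather than a design. The `Action` enum, `scoped_check`, `apply_correction`, and the `min_history` cutoff are all hypothetical names and values chosen for illustration.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"  # keep working, session state untouched
    ASK = "ask"            # surface one honest question to the user


def scoped_check(history_flags: list[bool], current_flagged: bool,
                 min_history: int = 5) -> Action:
    """Decide what to do with one message without reclassifying the session."""
    if not current_flagged:
        return Action.CONTINUE
    # Context integrity: a long, clean history outweighs a single hit.
    clean_history = len(history_flags) >= min_history and not any(history_flags)
    return Action.CONTINUE if clean_history else Action.ASK


def apply_correction(action: Action, user_says_casual: bool) -> Action:
    """Recovery path: an explicit clarification clears a pending question."""
    if action is Action.ASK and user_says_casual:
        return Action.CONTINUE
    return action


long_session = scoped_check([False] * 6, current_flagged=True)
short_session = scoped_check([False], current_flagged=True)
recovered = apply_correction(short_session, user_says_casual=True)

print(long_session, short_session, recovered)
```

Note what is missing: there is no session-wide mode to flip. The decision is scoped to the one message, and the user's correction is read as a correction instead of being filtered through the flag that caused the problem.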

The Bigger Picture

People post about this problem all the time. “The AI got weird.” “It stopped being helpful.” “Something changed mid-conversation.”

But most can’t name the mechanism. They notice the symptom — the tone shift, the stiffness, the quality drop — but not the cause: a classifier reclassified their entire session based on a single phrase.

Until safety systems learn to read a room — not just a sentence — we’re not dealing with safety systems. We’re dealing with fragile collaboration layers that can break the moment you talk to them like a human.

A good co-thinking system shouldn’t forget an entire working relationship because of one sentence it misread.

*Carmelyne Thompson writes about AI collaboration, interaction patterns, and the gap between how humans think and how systems interpret. She is the author of [Thinking Modes: How to Think With AI — The Missing Skill](https://carmelyne.com/thinking-modes).*