Everyone’s vibe coding now. Your LinkedIn feed says so. The Twitter/X threads say so. The “I built a SaaS in a weekend with zero code” posts definitely say so.

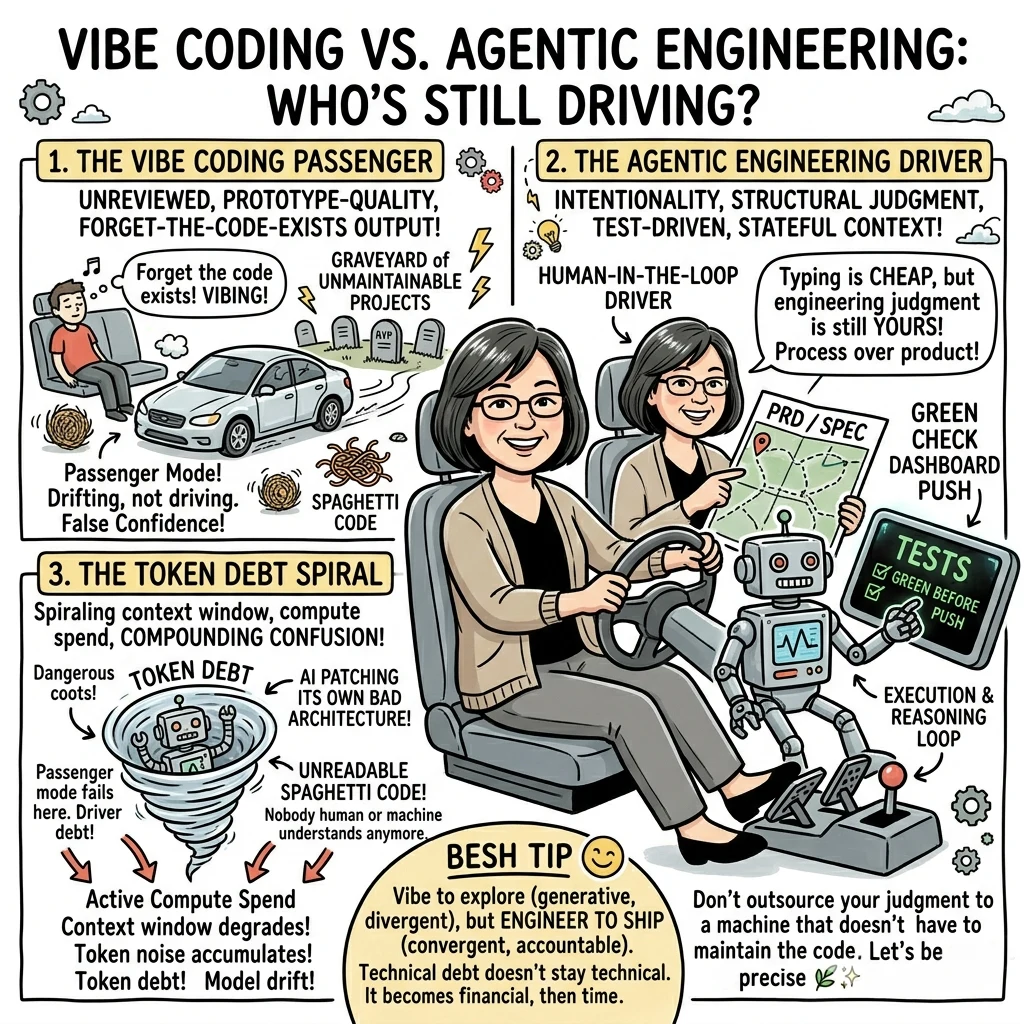

But here’s what nobody’s telling you: most of what gets called “vibe coding” is actually two very different things happening under the same name — and one of them ships production software, while the other creates a graveyard of unmaintainable projects and false confidence.

The difference has a name. And it matters more than most people realize.

First, Let’s Respect Where “Vibe Coding” Actually Came From

Andrej Karpathy coined the term in February 2025. His original description was specific: prompting LLMs to write code while you “forget that the code even exists.” Passenger mode. You describe what you want, the AI produces it, you don’t really look at what got made.

That’s a real and useful mode. For prototypes. For throwaway experiments. For “does this idea even work at all?” exploration. Karpathy wasn’t saying it was bad — he was naming a real behavior pattern that had emerged.

But then the internet did what the internet does: it expanded the definition to mean any AI-assisted coding. And now we’ve lost the distinction that matters most.

Simon Willison, who’s been building seriously with these tools longer than most, just published his Agentic Engineering Patterns guide and he’s explicit about this: vibe coding and agentic engineering are not the same thing, and conflating them is a mistake. We need vibe coding to keep meaning unreviewed, prototype-quality, forget-that-the-code-exists output — precisely so we have a word for what it’s not.

What Agentic Engineering Actually Means

Agentic engineering is what happens when you use coding agents — tools like Claude Code, OpenAI Codex, Gemini CLI — with intentionality. These agents write and execute code in a loop. They can iterate toward working software. That execution capability is what makes the whole thing different from autocomplete.

But here’s what Simon nails that most people miss: agents didn’t make the engineering judgment disappear. They just made the typing cheaper.

Code has historically been expensive to produce. A few hundred lines of clean, tested, production-ready code could easily take a skilled developer a full day. That constraint shaped everything — how we plan, how we estimate, what features get built, what gets skipped. Our entire professional intuition about software was calibrated to that cost.

Coding agents dropped the cost of typing code to near zero.

They did not drop the cost of good code.

Good code still means: it works, and you know it works. It handles errors gracefully. It’s tested. It’s documented. It won’t turn future changes into a nightmare. All of that still requires a human who’s paying attention, making decisions, reviewing output, and maintaining standards.

Agentic engineering is still engineering. The agent is the vehicle. You’re still the driver.

What I Built to Prove This Point

I’ve been exploring this boundary in practice, not just theory, through an open-source project called Tindlekit.

Tindlekit is a lightweight, fork-friendly, token-based idea funding platform. Simple PHP backend, SQLite or MySQL, designed to deploy in 15 minutes on shared hosting. Anyone can fork it, customize it, build community with it.

The deliberately boring stack is the point. By keeping the technology dead simple, you isolate the skill that actually matters: how you drive the AI. There’s no Kubernetes complexity to hide behind, no framework magic to blame. If the architecture is bad, you made it bad. If it’s solid, you made it solid.

The Project Defines Its Own Levels

The Tindlekit docs explicitly distinguish between levels of working with AI:

- Level 1: Following tutorials, copying solutions. “Make this work.”

- Level 2 (Vibe Coding): Understanding requirements, collaborating, shipping. “Make this matter.”

- Level 3+: Teaching others, building sustainable systems. “Make others successful.”

Notice what Level 2 is actually describing. It’s not “forget the code exists.” It’s: work from a PRD, pass context through structured documents, treat commits as signals not just code drops, collaborate around shared product thinking.

Here’s the thing — the moment you pick up a PRD, you’ve already stepped out of pure vibe mode. PRDs aren’t a vibe coding thing. They’re an engineering discipline thing. Calling it “Level 2 Vibe Coding” is a deliberate trojan horse: the vibe is the onramp that gets people in the door, the structure is what’s actually waiting inside. I love that framing precisely because it works on both levels — it tells newcomers “you belong here” while quietly installing the habits that matter.

The HOW_TO_VIBECODE.md Is Actually a Framework Doc

The project’s contribution guide sounds casual — “vibe rules,” “friendly commit messages,” “keep the vibe flowing” — but underneath it’s a structured 4-week development cycle:

- Week 1: Product immersion. Read the PRD before touching code. Understand the vision, not just the features.

- Week 2: MVP development from requirements. API endpoints, integration, testing the full user loop.

- Week 3: Production readiness. Deployment, security, performance, not just “it works on my machine.”

- Week 4: Community and sustainability. Documentation excellence, mentorship, contributing improvements back.

That’s not vibing. That’s an intentional engineering process dressed in accessible language. The playfulness is a feature, because it lowers the barrier to entry, but the structure underneath is real.

The Contribution Model Is the Demonstration

This is the part I’m most proud of, and the part most people would miss.

Tindlekit’s CONTRIBUTORS.md credits human and AI contributors transparently and separately, with distinct contribution philosophies for each. Claude contributed documentation and deployment guides. GPT-4o worked on architecture and workflow docs. GPT-5 contributed prompt engineering guides.

And me — listed as “Human-in-the-Loop Driver.”

That’s not a courtesy title. In the project docs, that role is defined as: directing, reviewing, and approving all AI-generated outputs before merging; running tests and enforcing the “green before push” standard; maintaining alignment with the educational mission.
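The “green before push” standard is the easiest part of that role to automate. Here is a minimal sketch in Python, assuming the test suite can be run with a single command. The names `is_green`, `push_exit_code`, and `DEFAULT_TEST_COMMAND` are illustrative and are not taken from the Tindlekit repository.

```python
import subprocess
import sys
from typing import Sequence

# Hypothetical default; swap in whatever command runs your own test suite.
DEFAULT_TEST_COMMAND = [sys.executable, "-m", "unittest", "discover"]


def is_green(test_command: Sequence[str]) -> bool:
    """Run the test command and report whether it exited with status 0."""
    result = subprocess.run(test_command, capture_output=True, text=True)
    return result.returncode == 0


def push_exit_code(test_command: Sequence[str]) -> int:
    """Return 0 to allow the push, 1 to block it."""
    if is_green(test_command):
        print("Tests are green: push allowed.")
        return 0
    print("Tests are red: push blocked. Fix the failures first.")
    return 1
```

Wired into a Git `pre-push` hook, a script like this would end with `sys.exit(push_exit_code(DEFAULT_TEST_COMMAND))`. Git aborts the push when the hook exits with a non-zero status, so a red test run never leaves your machine.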

The AI agents generated. I decided what stayed.

That’s agentic engineering: agents doing the heavy lifting on execution while a human maintains judgment, intent, and standards.

The Diagnostic: Which Mode Are You Actually In?

Here’s a quick way to figure out where you actually are when you’re building with AI:

You’re vibe coding if:

- You can’t explain why the code does what it does

- You’re accepting outputs without reading them

- “It works” is your only test

- You couldn’t maintain or debug it without regenerating from scratch

- Your context is a chat window, not a document

You’re doing agentic engineering if:

- You have a PRD, a spec, or at minimum a clear mental model before prompting

- You review what gets generated and make decisions about it

- You’re running actual tests, not just “does it load”

- You’re tracking what the agent knows through structured context (CLAUDE.md, continuity docs, memory files)

- You could explain the architecture to another developer

The dangerous middle — and this is where most people actually are:

- You’re generating code fast and feel productive

- You’re not quite reading it carefully

- You’re not testing edge cases

- You’ve outsourced your engineering judgment without realizing it

If you recognized yourself in that list, here’s your one move: Before your next prompt session, write a one-page PRD. Not a spec document, not a technical design — just one page answering: what problem does this solve, who uses it, what does done actually look like, and what are the three things that must not break. That document becomes your context anchor. It’s what you hand the agent at the start of every session. It’s what you check your outputs against. It’s the difference between driving and drifting. Everything else follows from having that page.
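If it helps to see that one page as a structure, here is a rough sketch in Python. The class name `OnePagePRD`, its field names, and the sample answers are my own illustrations, not part of any tool. The only rules it enforces are the ones above: four answers, and exactly three things that must not break.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class OnePagePRD:
    problem: str               # What problem does this solve?
    users: str                 # Who uses it?
    done: str                  # What does "done" actually look like?
    must_not_break: List[str]  # The three things that must not break

    def __post_init__(self) -> None:
        # Reject a half-written page before it reaches the agent.
        for name in ("problem", "users", "done"):
            if not getattr(self, name).strip():
                raise ValueError(f"The '{name}' answer is empty.")
        if len(self.must_not_break) != 3:
            raise ValueError("List exactly three things that must not break.")

    def to_context(self) -> str:
        """Render the page as plain text to paste at the start of a session."""
        lines = [
            "PRD",
            f"Problem: {self.problem}",
            f"Users: {self.users}",
            f"Done means: {self.done}",
            "Must not break:",
        ]
        lines += [f"- {item}" for item in self.must_not_break]
        return "\n".join(lines)


# Illustrative answers only; write your own.
prd = OnePagePRD(
    problem="Community members have no simple way to back ideas they like.",
    users="Small communities running the app on shared hosting.",
    done="A member can submit an idea and pledge tokens to it end to end.",
    must_not_break=["Token balances", "Idea submission", "Login"],
)
print(prd.to_context())
```

The validation is the point of the sketch: a page with a blank answer or a vague list of “things that matter” fails before the session starts, which is cheaper than finding out three weeks in.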

The dangerous middle is where technical debt accelerates at AI speed. You’re getting the output volume of agentic engineering with the quality assurance of vibe coding. That combination will find you eventually.

There’s a specific failure mode I call Token Debt — and it’s worse than regular technical debt because it’s self-reinforcing. It goes like this: the agent generates bad architectural code early on. You don’t catch it because you’re in passenger mode. Later, something breaks. So you paste the broken code back into the agent and ask it to fix it. The agent patches it. Something else breaks because the patch didn’t understand the original problem. You paste that in too. Now you’re burning context window and API credits feeding an AI its own bad decisions, spiraling deeper into a codebase that nobody — human or machine — fully understands anymore.

Regular technical debt costs you future developer time. Token Debt costs you that plus active compute spend, plus context degradation, plus the compounding confusion of an agent that’s been reasoning from flawed premises across a long session. The meter is running while you dig the hole deeper.
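A back-of-the-envelope sketch shows why the meter runs faster the longer the loop goes on. It assumes a simplified model in which every fix round re-sends the whole conversation so far and each round adds a fixed amount of pasted errors and patches. The function name and all the numbers are illustrative, not measurements.

```python
def tokens_spent(initial_context: int, added_per_round: int, rounds: int) -> int:
    """Total input tokens sent when every fix round re-sends the full context."""
    total = 0
    context = initial_context
    for _ in range(rounds):
        total += context            # the agent re-reads everything so far
        context += added_per_round  # pasted errors and patches grow the context
    return total


print(tokens_spent(10_000, 2_000, 5))   # 70000
print(tokens_spent(10_000, 2_000, 10))  # 190000
```

Under these assumptions, doubling the number of fix rounds from 5 to 10 costs about 2.7 times the tokens, not twice. The spend grows faster than the effort, and that is before counting the quality loss from a context full of failed patches.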

The driver catches bad architecture at the foundation. The passenger discovers it three weeks later when the fix costs more than the original build.

The Mirage of Completeness

There’s a visual trick that vibe coding plays on you that agentic engineering doesn’t.

When you vibe-code a project, you often end up with something that looks finished. The UI is there. The buttons work. It looks like a product. But underneath, the error handling is thin or missing, the edge cases were never considered, the backend is doing exactly what the happy path needs and nothing else.

Paint without plumbing.

Agentic engineering is fundamentally about the plumbing — the parts nobody sees. The error states. The validation. The test coverage. The graceful degradation when something goes wrong at 2am. Tindlekit’s deployment guide has a whole troubleshooting section not because things always break, but because someone thought about what breaks and built recovery paths for it. That’s not vibe energy. That’s engineering.
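To make paint versus plumbing concrete, here is a small sketch in Python. The token-pledge functions and the `PledgeError` class are hypothetical examples invented for this illustration; they are not Tindlekit's actual code.

```python
class PledgeError(ValueError):
    """Raised when a pledge request cannot be honored."""


def pledge_happy_path(balance: int, amount: int) -> int:
    # Paint: correct only when the caller sends sensible input.
    return balance - amount


def pledge_with_plumbing(balance: int, amount: int) -> int:
    """Return the new balance, rejecting inputs the happy path ignores."""
    if isinstance(amount, bool) or not isinstance(amount, int):
        raise PledgeError("Pledge amount must be a whole number of tokens.")
    if amount <= 0:
        raise PledgeError("Pledge amount must be positive.")
    if amount > balance:
        raise PledgeError("Not enough tokens for this pledge.")
    return balance - amount


print(pledge_happy_path(10, -5))   # 15: a negative pledge creates tokens
print(pledge_happy_path(10, 50))   # -40: the balance goes below zero
print(pledge_with_plumbing(10, 4)) # 6
```

Both versions pass the demo where someone pledges 4 tokens out of 10. Only one of them survives the first user who types a negative number.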

The mirage is dangerous because it passes demos. It fools investors. It even fools the builder. Right up until a real user finds the edge case you never handled.

Why the Framing Matters for Where We’re Going

Simon Willison put it well: the industry is still figuring out the new habits that respond to what agentic engineering actually makes possible. The old instincts — “don’t build that, it’s not worth the time” — need to be re-evaluated constantly because the cost of trying dropped so dramatically.

But the instinct that must survive is: someone has to be accountable for what ships.

The Tindlekit philosophy calls this out directly: “process over product.” The journey of structured development matters more than any single outcome. Skills transfer to every future project. Specific tools become obsolete.

That’s not a soft sentiment. It’s a practical statement about compounding returns. An engineer who understands how to drive coding agents (who knows how to write a PRD that an agent can work from, how to structure context so the agent doesn’t drift, how to verify outputs, and how to maintain quality standards) ships more, ships better, and can keep doing it sustainably.

An engineer who learned to vibe their way to a working prototype but never developed those structures will hit a wall. The wall looks like: a codebase nobody can maintain, features that break each other unpredictably, no way to onboard anyone else, and eventually a rewrite.

For solo founders and indie builders, that rewrite isn’t just a technical cost — it’s a runway cost. Every week you spend untangling vibe-coded spaghetti is a week you’re not shipping the next feature, not acquiring the next customer, not moving toward sustainability. Technical debt doesn’t stay technical. It becomes financial debt, then time debt, then the kind of exhaustion that makes you question whether the whole thing was worth starting. Agentic engineering isn’t about perfectionism. It’s about not burning your runway fixing decisions you made in passenger mode.

The Meta-Point: This Is a Thinking Mode

If you’ve read my book Thinking Modes: How to Think With AI, you’ll recognize the pattern here. Vibe coding is a valid thinking mode — generative, exploratory, low-friction. It’s excellent for divergent phases, for prototyping, for “let’s see what’s even possible.”

Agentic engineering is a different mode: convergent, intentional, accountable. You’re directing execution toward a defined standard.

But there’s a layer underneath both of those that most people never get to: metacognition. In agentic engineering, you’re not just thinking about the code. You’re thinking about how to tell the agent to think about the code. You’re writing prompts that encode your architectural values. You’re structuring context documents so the agent doesn’t drift from your intent. You’re reasoning about the agent’s reasoning. That’s a different cognitive level entirely — and it’s exactly what separates engineers who get compounding results from AI tools from those who plateau.

This is the skill that Thinking Modes is really about. Not which tool to use, but which cognitive mode you’re operating in — and whether you’re doing it consciously.

The mistake isn’t using vibe coding. The mistake is staying in vibe mode when you’ve crossed into a phase that requires engineering mode — and not noticing the transition happened.

The best builders I know move fluidly between both. They vibe to explore, then shift modes to build what survives contact with reality.

The question isn’t which mode is better. The question is: do you know which mode you’re in right now?

Build It Forward

Tindlekit was built to teach this exact progression. The whole project is scaffolded around helping developers move from Level 1 (copy-paste solutions) through Level 2 (product-driven, collaborative building) toward the kind of practice where you’re not just building things — you’re building people who can build things.

That’s the long game. That’s what agentic engineering enables when you’re actually driving.

The code is open source. Fork it. Break it. Rebuild it. That’s the point.

→ github.com/carmelyne/tindlekit

Frequently Asked Questions

What’s the difference between vibe coding and agentic engineering?

Vibe coding — in its original definition from Andrej Karpathy — means prompting an AI to write code while you forget the code even exists. You describe what you want, the AI produces it, you don’t review it closely. It’s passenger mode: fast, low-friction, great for throwaway prototypes and exploration.

Agentic engineering is what happens when you use coding agents with intentionality and structure. You write a PRD before you prompt. You review what gets generated. You run real tests. You maintain architectural judgment. The agent does the execution; you stay in the driver’s seat. The distinction isn’t which tools you use — it’s whether you’re still driving.

Is vibe coding bad?

No. It’s a valid mode for the right phase. Vibe coding is excellent for divergent exploration — testing whether an idea is even feasible, generating a quick prototype to validate a concept, moving fast in the early “does this work at all?” stage.

The problem isn’t vibe coding. The problem is staying in vibe mode when the project has crossed into a phase that requires engineering judgment — and not noticing the transition happened. A prototype is fine as a prototype. It becomes a liability the moment you treat it as a foundation.

What is Token Debt?

Token Debt is a failure mode specific to AI-assisted development, and it’s worse than regular technical debt because it’s self-reinforcing.

It works like this: the agent generates bad architectural code early on. You don’t catch it because you’re in passenger mode. Something breaks later. You paste the broken code back into the agent and ask it to fix it. The agent patches it. Something else breaks because the patch didn’t understand the root problem. You paste that in too.

Now you’re burning context window and API credits feeding an AI its own bad decisions, spiraling deeper into a codebase that nobody — human or machine — fully understands anymore. Regular technical debt costs future developer time. Token Debt costs that plus active compute spend, plus context degradation, plus compounding model confusion across a long session.

The driver catches bad architecture at the foundation. The passenger discovers Token Debt three weeks later when fixing it costs more than the original build.

Do I need to know how to code to do agentic engineering?

You need enough technical literacy to review what the agent produces and recognize when something is wrong — not necessarily to write the code yourself. The judgment layer is what agentic engineering requires, not the typing layer.

That said, the less technical context you have, the more important your other structures become: a clear PRD, explicit success criteria, a defined test for “done.” Those documents become your review framework when you can’t evaluate the code line by line. Agentic engineering without technical background is harder but not impossible — it just means your context documents have to work even harder.

What is a PRD and why does it matter for AI coding?

PRD stands for Product Requirements Document. At its simplest it’s a written answer to four questions: what problem does this solve, who uses it, what does done actually look like, and what are the three things that must not break.

It matters for AI coding because agents typically carry nothing over from one session to the next unless you hand it to them, and they have no inherent stake in your product vision. Without a PRD, every session starts from scratch and the agent fills in the gaps with its own assumptions, which may not match yours. With a PRD, you have a context anchor: something you hand the agent at the start of every session, check your outputs against, and use to catch drift before it becomes Token Debt.

The moment you write a PRD, you’ve already stepped out of pure vibe mode. PRDs are an engineering discipline, not a vibe coding thing.

What tools do agentic engineers actually use?

The core tools right now are coding agents that can both write and execute code in a loop: Claude Code, OpenAI Codex, and Gemini CLI are the main ones. The execution capability is what makes them different from a regular chatbot — they can iterate toward working software, not just output text.

Beyond the agents themselves, agentic engineering relies on structured context: CLAUDE.md or equivalent files that tell the agent about your project, continuity documents that carry state across sessions, and PRDs that anchor intent. The tools change fast; the discipline of structured context doesn’t.

My own open-source project Tindlekit is built to be a controlled learning environment for exactly this — simple stack, clear PRD, documented contribution philosophy. github.com/carmelyne/tindlekit

How do I know if I’m in the dangerous middle?

Ask yourself three questions right now:

One: Before your last prompt session, did you write down what “done” looks like?

Two: Did you actually read the code that came back — not just run it?

Three: Could you explain your current architecture to another developer without regenerating anything?

If the answer to all three is no, you’re in the dangerous middle. You’re getting the output volume of agentic engineering with the quality assurance of vibe coding. That combination will find you — usually at the worst possible time.
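The three questions can be written down as a tiny self-check. This is a sketch in Python with illustrative names (`QUESTIONS`, `gaps`, `in_dangerous_middle`); it follows the rule exactly as stated above, where the warning fires when all three answers are no.

```python
from typing import Dict, List

QUESTIONS = {
    "wrote_done": "Did you write down what 'done' looks like before the session?",
    "read_code": "Did you read the code that came back, not just run it?",
    "can_explain": "Could you explain the architecture without regenerating anything?",
}


def gaps(answers: Dict[str, bool]) -> List[str]:
    """Return the questions answered 'no', in the order they are asked."""
    return [text for key, text in QUESTIONS.items() if not answers[key]]


def in_dangerous_middle(answers: Dict[str, bool]) -> bool:
    """Apply the rule from the text: all three answers are 'no'."""
    return len(gaps(answers)) == len(QUESTIONS)


# Example answers for one session (illustrative).
session = {"wrote_done": False, "read_code": False, "can_explain": False}
print(in_dangerous_middle(session))  # True
for question in gaps(session):
    print("Gap:", question)
```

Even when the check comes back false, the list returned by `gaps` is worth reading: each entry is a habit to fix before the next session.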

The one move to get out: write a one-page PRD before your next session. What problem does this solve. Who uses it. What does done look like. What three things must not break. That page is the difference between driving and drifting.

Can vibe coding and agentic engineering coexist in the same project?

Yes — and the best builders move fluidly between both. They vibe to explore, then shift modes to build what survives contact with reality.

A common pattern: vibe code a rough prototype to validate that the idea works at all, then stop, write a proper PRD based on what you learned, and rebuild the foundation with agentic engineering discipline. The prototype was never meant to survive — it was research. The rebuild is the product.

The mistake isn’t using vibe coding. The mistake is not knowing when to make the switch.

Carmelyne Thompson is a Filipino solopreneur, systems thinker, and builder with 25+ years in full-spectrum web development. She’s the author of Thinking Modes: How to Think With AI (available at carmelyne.com/Gumroad) and the creator of Terrakindle, an ecological global stewardship framework. She writes about human-AI collaboration for builders who are still driving.